We introduce entity triggers, an effective proxy of human explanations for facilitating label-efficient learning of NER models. We crowd-sourced 14k entity triggers for two well-studied NER datasets.

- Trigger Dataset: We introduce the concept of “entity triggers,” a novel form of explanatory annotation for named entity recognition problems. We crowd-source and publicly release 14k annotated entity triggers on two popular datasets: CoNLL03 (generic domain) and BC5CDR (biomedical domain).

- Novel Learning Framework: We propose a learning framework, named Trigger Matching Network (TMN), which encodes entity triggers and softly grounds them on unlabeled sentences to increase the effectiveness of the base entity tagger.

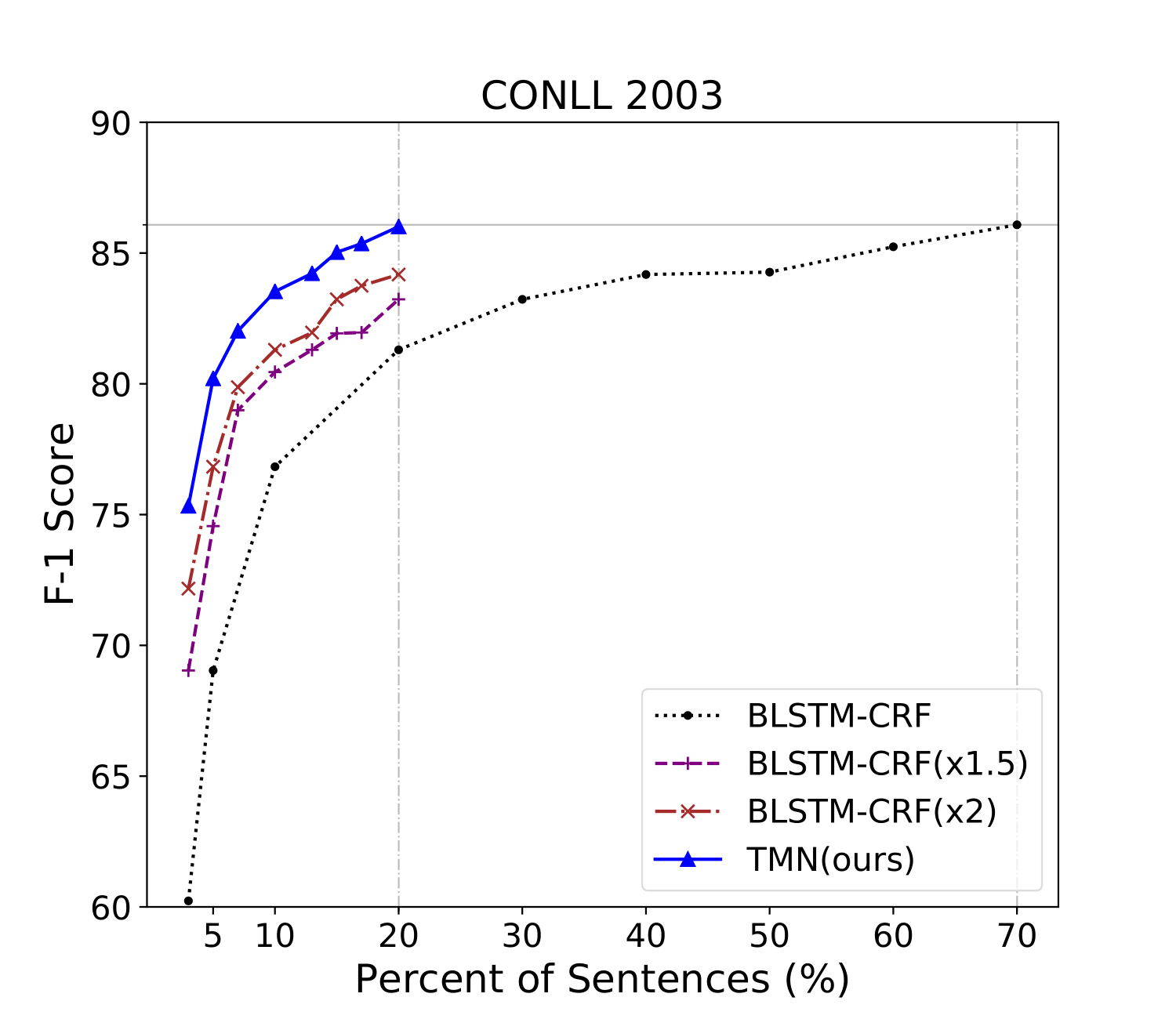

- NER Performance Improvement: Experimental results show that the proposed trigger-based framework is significantly more cost-effective. Using only 20% of the trigger-annotated sentences from the original CoNLL03 dataset, TMN achieves performance comparable to the conventional model using 70% of the annotated sentences.

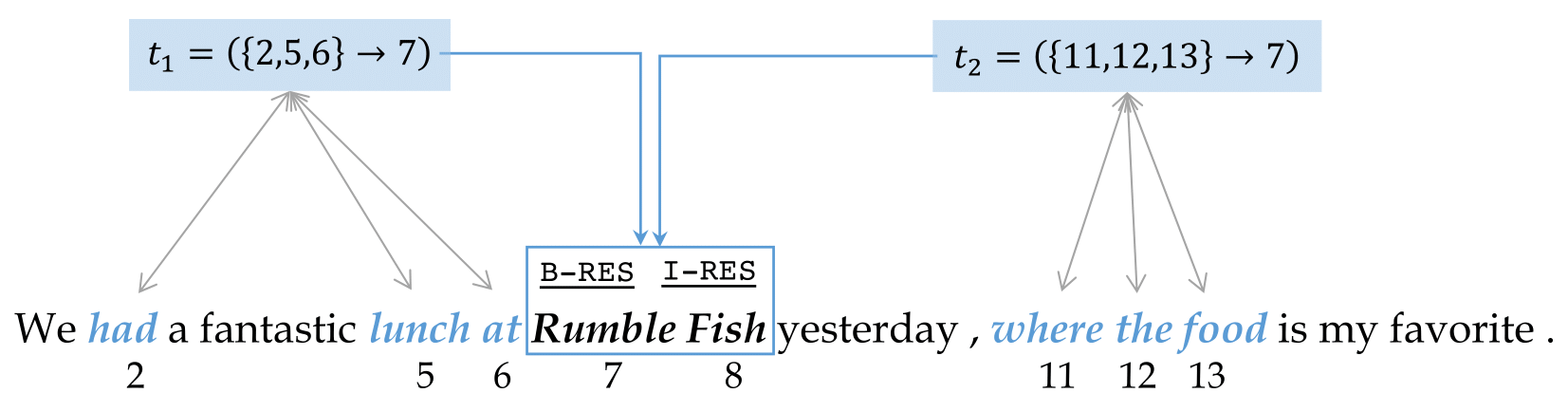

We show two individual entity triggers: t1 (“had ... lunch at”) and t2 (“where the food”). Both are associated with the same entity mention “Rumble Fish” (starting at the 7th token), typed as restaurant (RES).
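To make the caption above concrete, here is a minimal sketch of how one trigger-annotated sentence could be represented. The class names, field names, and the 0-based, end-exclusive span convention are assumptions made for illustration, not the released dataset format, and the sentence is a hypothetical reconstruction chosen only to be consistent with the caption (two triggers, “Rumble Fish” starting at the 7th token).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EntityTrigger:
    """A group of (possibly non-contiguous) token positions that cue an entity."""
    token_indices: List[int]  # 0-based positions in the sentence


@dataclass
class TriggerAnnotation:
    """One sentence with a typed entity mention and its triggers (hypothetical schema)."""
    tokens: List[str]
    entity_span: Tuple[int, int]  # 0-based start, exclusive end
    entity_type: str
    triggers: List[EntityTrigger]

    def entity_text(self) -> str:
        start, end = self.entity_span
        return " ".join(self.tokens[start:end])

    def trigger_text(self, i: int) -> str:
        # Gaps between trigger tokens are simply skipped here.
        return " ".join(self.tokens[j] for j in self.triggers[i].token_indices)


# Hypothetical sentence consistent with the caption; the figure's wording may differ.
tokens = (
    "We had a fantastic lunch at Rumble Fish yesterday , "
    "where the food is my favorite ."
).split()

example = TriggerAnnotation(
    tokens=tokens,
    entity_span=(6, 8),  # "Rumble Fish"; index 6 is the 7th token
    entity_type="RES",
    triggers=[
        EntityTrigger([1, 4, 5]),     # t1: "had ... lunch at"
        EntityTrigger([10, 11, 12]),  # t2: "where the food"
    ],
)

print(example.entity_text(), example.entity_type)
print([example.trigger_text(i) for i in range(len(example.triggers))])
```

Storing triggers as index lists rather than strings keeps non-contiguous triggers such as t1 unambiguous.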

We stretch the BLSTM-CRF curve along the x-axis by a factor of 1.5 or 2. Even if we assume that annotating entity triggers costs 150% or 200% of the human effort of annotating entities only, TMN is still much more effective.
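The cost argument can be checked with a few lines of arithmetic. The sketch below assumes annotation effort scales linearly with the number of sentences, and it reuses the 20% and 70% figures reported for CoNLL03; the function and constant names are hypothetical.

```python
def equal_cost_entity_only_share(trigger_share: float, cost_factor: float) -> float:
    """Share of entity-only sentences that costs as much human effort as
    `trigger_share` percent of trigger-annotated sentences, assuming effort
    is linear in the number of sentences."""
    return trigger_share * cost_factor


TMN_TRIGGER_SHARE = 20.0  # percent of sentences annotated with triggers
BASELINE_SHARE = 70.0     # percent of entity-only sentences the baseline needs

# Effort of the TMN setting, expressed in entity-only sentence percentages.
effort_by_factor = {
    factor: equal_cost_entity_only_share(TMN_TRIGGER_SHARE, factor)
    for factor in (1.5, 2.0)
}

for factor, effort in effort_by_factor.items():
    cheaper = effort < BASELINE_SHARE
    print(f"cost factor {factor}: TMN effort = {effort}% vs baseline {BASELINE_SHARE}% "
          f"(TMN cheaper: {cheaper})")
```

Under both assumed cost factors, the TMN setting corresponds to less annotation effort than the 70% of sentences the conventional model needs for comparable performance.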


Trigger Matching Networks

Trigger Matching Networks (TMN) train and run inference in two stages.

Interpretability

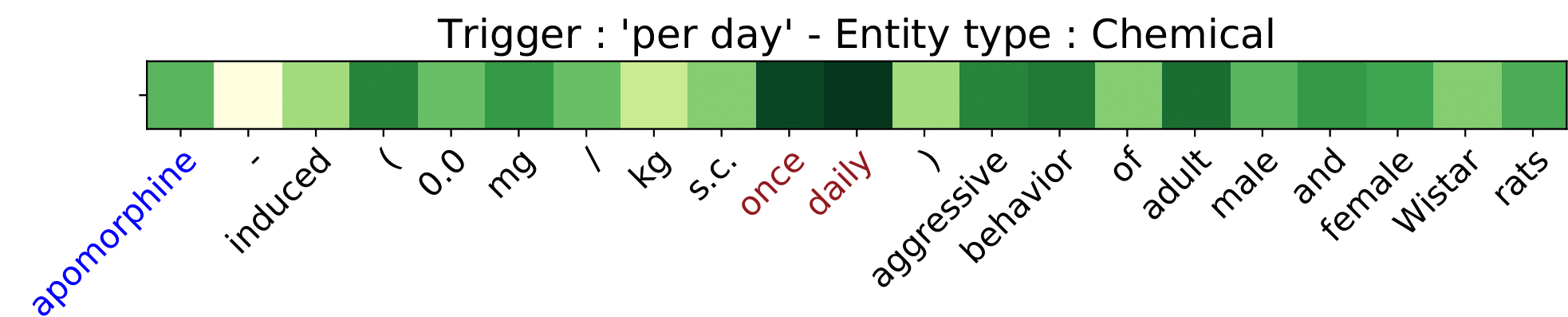

The example above illustrates that the trigger attention scores help the TMN model recognize entities.

The training data has ‘per day’ as a trigger phrase for chemical-type entities, and this trigger matches the phrase ‘once daily’ in an unseen sentence during the inference phase of TrigMatcher.

This result not only supports our argument that trigger-enhanced models such as TMN can learn effectively, but also demonstrates that trigger-enhanced models can provide reasonable interpretations, something that other neural NER models lack.
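The ‘per day’ / ‘once daily’ match described above can be pictured as a nearest-neighbor lookup in a shared embedding space. The following is only a toy sketch under stated assumptions: this page does not specify the similarity measure, so Euclidean distance is an assumption, the 3-dimensional vectors are made up, and `match_trigger` is a hypothetical helper rather than part of the released code.

```python
import math
from typing import Dict, List, Tuple


def l2_distance(a: List[float], b: List[float]) -> float:
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def match_trigger(
    query_vec: List[float], trigger_vecs: Dict[str, List[float]]
) -> Tuple[str, float]:
    """Return the stored trigger closest to the query vector, with its distance."""
    return min(
        ((name, l2_distance(query_vec, vec)) for name, vec in trigger_vecs.items()),
        key=lambda pair: pair[1],
    )


# Made-up embeddings standing in for encoded training triggers.
trigger_vecs = {
    "per day": [0.9, 0.1, 0.0],
    "had ... lunch at": [0.0, 0.8, 0.3],
    "where the food": [0.1, 0.7, 0.6],
}

# Made-up embedding standing in for an unseen sentence containing "once daily".
query_vec = [0.8, 0.2, 0.1]

best_trigger, distance = match_trigger(query_vec, trigger_vecs)
print(best_trigger, round(distance, 3))
```

Because matching happens between vectors rather than strings, a trigger can be grounded on a sentence that shares no surface tokens with it, which is the sense in which the grounding is soft.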

To cite us

    @inproceedings{lin-etal-2020-triggerner,
        title = "{T}rigger{NER}: Learning with Entity Triggers as Explanations for Named Entity Recognition",
        author = "Lin, Bill Yuchen and
          Lee, Dong-Ho and
          Shen, Ming and
          Moreno, Ryan and
          Huang, Xiao and
          Shiralkar, Prashant and
          Ren, Xiang",
        booktitle = "Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics",
        month = jul,
        year = "2020",
        address = "Online",
        publisher = "Association for Computational Linguistics",
        url = "https://www.aclweb.org/anthology/2020.acl-main.752",
        pages = "8503--8511",
    }